How big can a production CMS server get?

The short answer is as big as your blank check allows.

As you add users, workflow, translation, heavy publishing, and frequent opening and changing of components, the CMS server will inevitably need to grow.

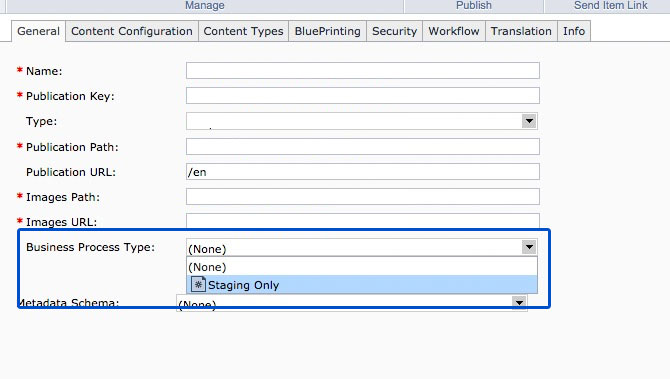

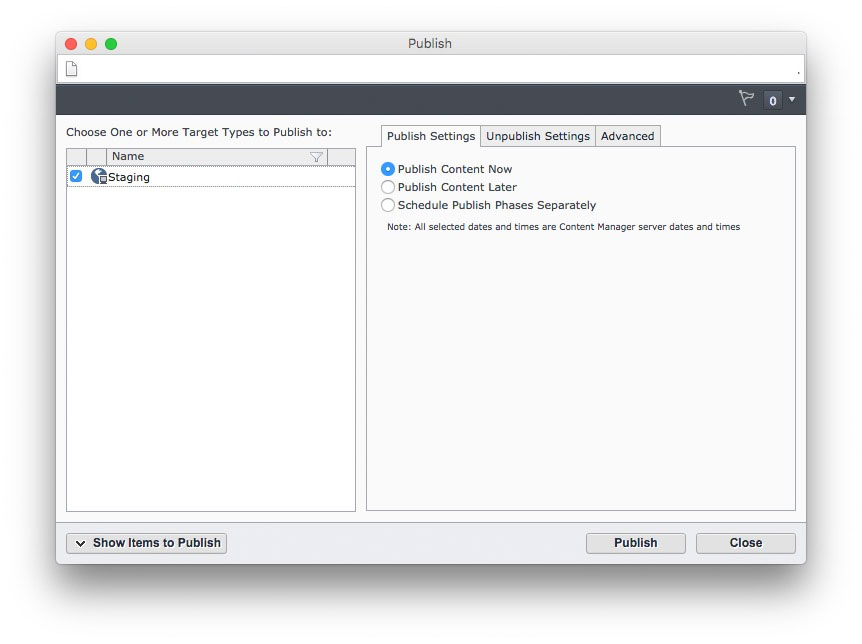

Often, multiple CM services are all enabled on one large machine for the enterprise. The downside is that when one problem occurs, every part of your CMS fails with it. Below I show an infrastructure that handles very large publishing loads and can scale to take on more. The CMS also handles workflow services and translation integration with World Server.